As enterprises increasingly shift from a monolithic to a microservices architecture, IT teams face the problem of orchestrating these microservices effectively. When a single application is built from a few containerized services, communication between them is easy to manage. However, enterprise applications with hundreds or thousands of microservices need a better solution for load balancing, monitoring, traffic routing and security.

Enter the service mesh architecture.

Service Mesh Architecture

A service mesh is an infrastructure layer that manages service-to-service communication, and provides a way to dynamically route, monitor, and secure microservice-based applications.

Previously, the logic governing inter-service communication was coded into each microservice. But that’s not a feasible option when dealing with a large volume of microservices, or scaling applications by adding new services.

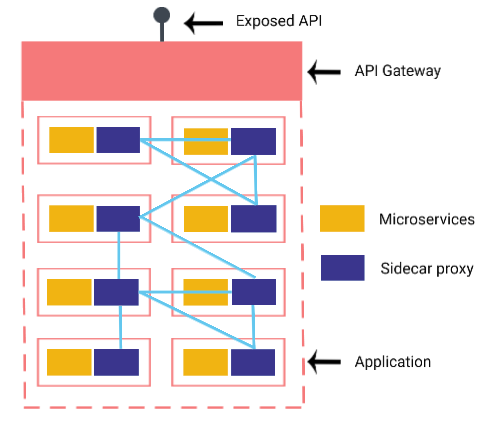

The solution is to have proxies that manage the service-to-service communication, running beside each microservice rather than within it. These are also known as ‘sidecar’ proxies and together they form the abstracted mesh architecture that manages the microservices communication.

Why is this Needed?

The objective with microservices was to build applications as a collection of independent services, any of which can fail without causing a system-wide outage. In practice, however, most microservice-based applications began operating with direct communication between services. As application complexity and the number of microservices increased, this created greater interdependence between services, lowering both agility and system resilience.

This is why complex enterprise applications with a large number of microservices need a service mesh architecture.

Isn't that what API gateways do?

Yes, API gateways perform a function similar to that of a service mesh, i.e. they govern the flow of information. The key difference lies in the kind of communication they govern.

An API gateway manages the communication between an application and other systems, both within and outside the enterprise architecture. It provides a single entry point into the application for requests from all external clients, and handles user authentication, routing, monitoring and error handling. It also hides the underlying complexity of the application and its component microservices from external clients.

A service mesh architecture, on the other hand, manages the communication between the microservices within an application.

All the proxy sidecars that make up the service mesh are listed in a service registry. Each microservice that wants to request information (client microservice) will have its proxy sidecar look up the registry to find the available proxies associated with the target microservice. It then uses the defined load balancing algorithm to direct its request to the right proxy.
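To make the lookup-then-balance flow concrete, here is a minimal Python sketch. It assumes an in-memory registry and a round-robin algorithm; the `ServiceRegistry` class, the service name and the proxy addresses are all hypothetical, and real meshes keep this state in a control plane rather than in a single object.

```python
from itertools import cycle


class ServiceRegistry:
    """Hypothetical in-memory registry mapping service names to proxy addresses."""

    def __init__(self):
        self._proxies = {}   # service name -> list of proxy addresses
        self._cursors = {}   # service name -> round-robin iterator

    def register(self, service, proxy_address):
        self._proxies.setdefault(service, []).append(proxy_address)
        # Rebuild the round-robin cursor whenever the instance list changes.
        self._cursors[service] = cycle(self._proxies[service])

    def lookup(self, service):
        """Return every proxy currently registered for the target service."""
        return list(self._proxies.get(service, []))

    def pick(self, service):
        """Choose the next proxy for the target service in round-robin order."""
        if service not in self._cursors:
            raise LookupError(f"no proxies registered for {service!r}")
        return next(self._cursors[service])


registry = ServiceRegistry()
registry.register("orders", "10.0.0.1:15001")
registry.register("orders", "10.0.0.2:15001")

# A client sidecar asks for a target proxy on each request.
picks = [registry.pick("orders") for _ in range(4)]
print(picks)
```

Round-robin is only one possible choice here; the same `pick` method could apply least-connections or weighted selection without the calling microservice changing at all.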

What problems does a service mesh solve?

The service mesh primarily resolves concerns around increasing interdependence that creeps into microservice-based applications as they scale in complexity. Here’s how:

Deploying multiple microservice versions simultaneously

Canary releases, which introduce a new version of a microservice to a select number or type of requests, are a standard way to ease in new features. However, routing requests between old and new versions is difficult when the logic is coded within each service, because services tend to have interdependencies on other services. Similarly, A/B testing of microservice versions requires dynamic routing capabilities that are best delivered by a service mesh.

The service mesh holds the routing rules, and decides which version of the target service each request from a source service should reach. This decoupled communication layer reduces the amount of code written for each microservice, while making inter-service routing logic easier to manage.
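As a rough illustration of such a routing rule, the sketch below splits traffic between two versions by weight. The `ROUTING_RULES` table, the `reviews` service and the 90/10 split are assumptions made for this example, not the configuration format of any particular mesh.

```python
import random

# Hypothetical routing table: percentage of traffic per version of a service.
ROUTING_RULES = {"reviews": {"v1": 90, "v2": 10}}


def choose_version(rules, rng=random):
    """Pick a version name at random, in proportion to the weights in `rules`."""
    versions = list(rules)
    weights = [rules[version] for version in versions]
    return rng.choices(versions, weights=weights, k=1)[0]


# Seeded generator so the sample is repeatable.
rng = random.Random(42)
sample = [choose_version(ROUTING_RULES["reviews"], rng) for _ in range(1000)]
canary_share = sample.count("v2") / len(sample)
print(f"share of requests sent to the canary: {canary_share:.1%}")
```

Shifting more traffic to the new version then means editing one weight in the rule, while the source and target services stay untouched.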

Detailed visibility into inter-service communication

In a complex microservices architecture, it can be difficult to pinpoint the exact location of a fault. But once all communication is routed through a service mesh, logs and performance metrics can be gathered on every inter-service call. This makes it easier to generate detailed reports and trace the point of failure.

The logs from the service mesh can also be used to create standardized benchmarks for the application, for example, how long to wait before retrying a service that has failed. Once these rules are coded into the service mesh, the system is no longer overloaded with unnecessary requests to a failed downstream service before the required time-out period has passed.
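A retry rule of this kind might look like the following sketch. The function name, the attempt count and the doubling back-off are illustrative assumptions; a real mesh would express the same policy as proxy configuration rather than application code.

```python
import time


def call_with_retries(call, attempts=3, backoff_seconds=0.1, sleep=time.sleep):
    """Call `call`, waiting longer after each failure before trying again."""
    last_error = None
    for attempt in range(attempts):
        try:
            return call()
        except ConnectionError as err:
            last_error = err
            if attempt < attempts - 1:
                # Double the wait after every failed attempt.
                sleep(backoff_seconds * (2 ** attempt))
    raise last_error


# Hypothetical downstream call that fails twice and then recovers.
outcomes = iter([ConnectionError("down"), ConnectionError("down"), "ok"])


def flaky_call():
    outcome = next(outcomes)
    if isinstance(outcome, Exception):
        raise outcome
    return outcome


waits = []  # record the waits instead of actually sleeping
result = call_with_retries(flaky_call, sleep=waits.append)
print(result, waits)
```

Because the waiting periods live in one shared policy, they can be tuned from the observed logs without redeploying any microservice.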

Microservice testing

Testing each microservice in isolation is critical to ensure application resilience. There are also instances where you need to test service behaviour when faults are introduced in downstream services. And that’s difficult and risky to do if we are forcing those faults to actually occur in the services.

The service mesh offers a safer way to do this: faults can be simulated at the proxy layer, without breaking the services themselves, and the system's response can then be studied.
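One way to picture fault injection at the proxy layer is the sketch below, which wraps a downstream call and fails or delays a configurable share of requests. `with_fault_injection`, `InjectedFault` and the `get_inventory` service are hypothetical names used only for illustration.

```python
import random
import time


class InjectedFault(Exception):
    """Raised by the proxy in place of a real downstream failure."""


def with_fault_injection(call, abort_ratio=0.0, delay_seconds=0.0,
                         rng=random, sleep=time.sleep):
    """Wrap `call` so that a share of requests is delayed or aborted."""
    def proxied(*args, **kwargs):
        if delay_seconds:
            sleep(delay_seconds)          # simulate a slow downstream service
        if rng.random() < abort_ratio:    # simulate a failing downstream service
            raise InjectedFault("simulated downstream failure")
        return call(*args, **kwargs)
    return proxied


def get_inventory(item):
    """Hypothetical downstream service that is healthy in reality."""
    return {"item": item, "in_stock": True}


healthy = with_fault_injection(get_inventory)
broken = with_fault_injection(get_inventory, abort_ratio=1.0)

print(healthy("book"))
try:
    broken("book")
except InjectedFault as fault:
    print("caller saw:", fault)
```

The downstream service itself never breaks; only the view that the calling service gets of it changes, which is what makes this kind of test low-risk.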

Fault Tolerance

Resilience is a key reason why a microservices architecture is preferred, and elements like circuit breakers, load balancing, rate limiting and timeouts are what make it possible. These rules are usually coded into each microservice, which increases complexity in the system and takes time to build.

Once again, the service mesh can be used to improve fault tolerance by taking these functionalities out of the microservices and adding them to the mesh. These can be implemented via a set of rules that will govern all microservices within the application, without actually cluttering the microservice implementation.
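As an example of one such rule, here is a minimal circuit breaker sketch. The class, the failure threshold and the reset window are assumptions chosen for illustration; in a mesh, the equivalent behaviour would be configured on the sidecar proxy instead of being written by hand.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without contacting the service."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_seconds=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_seconds = reset_seconds
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_seconds:
                raise CircuitOpenError("circuit open; request rejected")
            # Reset window elapsed: allow one trial call (half-open state).
            self._opened_at = None
            self._failures = self.max_failures - 1
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()  # open the circuit
            raise
        self._failures = 0  # a success closes the circuit fully
        return result


def failing_service():
    raise ConnectionError("downstream unavailable")


breaker = CircuitBreaker(max_failures=2, reset_seconds=30.0)
for _ in range(2):
    try:
        breaker.call(failing_service)
    except ConnectionError:
        pass

try:
    breaker.call(failing_service)
except CircuitOpenError as err:
    print(err)
```

After two consecutive failures the breaker stops forwarding requests, which gives the failing service room to recover instead of being hit with more traffic.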

So that was a quick rundown of the service mesh architecture and why it's becoming a crucial infrastructure requirement for enterprise applications. Upcoming posts will explore service mesh implementation in depth, and evaluate tools such as Istio and Linkerd for implementing a service mesh.

Srijan’s teams have expertise in decoupling monolithic systems with elegant single-responsibility microservices, as well as testing, managing and scaling a microservices architecture.

Looking to modernize legacy systems? Drop us a line and our enterprise architecture experts will be in touch.
