Strategic IP Information (SIPI), one of the leading online brand protection and content monitoring firms in Asia, was facing two major challenges:

- Inefficient, expensive processes that placed a heavy operational and financial strain on SIPI as it worked to deliver value for its clients.

- An inability to scale up to serve more clients, or to better serve existing ones, without investing more in hiring and training new staff.

This was largely due to resource-intensive, error-prone manual work and a lack of proper reporting. SIPI wanted to break free from these constraints, and Srijan stepped in to make that happen.

The core solution proposed was Intelligent Process Automation that could automate the bulk of their manual workflow. This solution was designed to automatically scan through hundreds of product listings to accurately spot and log counterfeits, while delivering significant savings in both time and money.

Here's how Srijan helped build the solution:

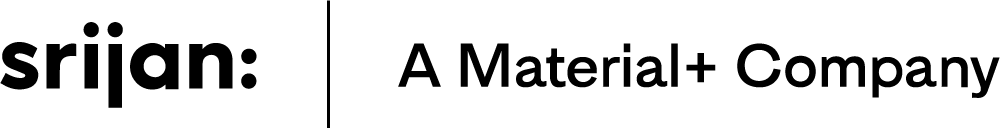

Creating the Crawler Algorithm

The Srijan team decided to use a third-party crawler as a base and customize it for the client’s business case. Several third-party crawlers were evaluated, including Diffbot, Import.io, 80legs, and Scrapinghub, on the basis of their scope for configuration, customization, execution capabilities, and interactivity. After weighing all the options, the team went ahead with ParseHub, as it fulfilled all the requirements for the project.

Next, the team created a proprietary algorithm that lets the crawler identify suspected counterfeits based on input keywords. The crawler was set to scan once every 24 hours, across 30 marketplaces, for 10 client brands.
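To make the scale of that schedule concrete, here is a minimal sketch of how a daily scan plan could be laid out. It is not the team's actual implementation: the `ScanJob` class, the `build_scan_jobs` and `is_due` helpers, and the placeholder marketplace and brand names are all hypothetical, and the sketch assumes every brand is scanned on every marketplace.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from itertools import product
from typing import List, Optional

SCAN_INTERVAL = timedelta(hours=24)  # from the text: one scan every 24 hours


@dataclass(frozen=True)
class ScanJob:
    """Hypothetical unit of work: one brand searched on one marketplace."""
    marketplace: str
    brand: str


def build_scan_jobs(marketplaces: List[str], brands: List[str]) -> List[ScanJob]:
    """Return one job per (marketplace, brand) pair."""
    return [ScanJob(m, b) for m, b in product(marketplaces, brands)]


def is_due(last_run: Optional[datetime], now: datetime) -> bool:
    """A job is due if it never ran, or its last run is at least 24 hours old."""
    return last_run is None or now - last_run >= SCAN_INTERVAL


# Placeholder names: the real marketplaces and brands are not given in the text.
marketplaces = [f"marketplace-{i}" for i in range(1, 31)]
brands = [f"brand-{i}" for i in range(1, 11)]
jobs = build_scan_jobs(marketplaces, brands)
print(len(jobs))  # 30 marketplaces x 10 brands
```

Under the all-pairs assumption, each daily cycle covers 300 marketplace and brand combinations, which gives a sense of why running this by hand was hard to scale.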

The crawler algorithm was capable of:

- Running searches for various combinations of spelling, price, and other factors to look for counterfeit product results, based on keywords fed into the system.

- Converting prices listed in global marketplaces to common currency, for easier comparison

- Sorting all search results into suspect and non-suspect categories for next steps

Automating all of these tasks standardized the process, reduced manual errors, and allowed the client to significantly scale up its work.
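The three capabilities listed above can be illustrated with a small sketch. The proprietary algorithm itself is not described in this article, so everything here is an assumption made for illustration: the `Listing` class, the single-letter-deletion spelling variants, the exchange rates (made-up numbers, not real rates), and the rule that a listing is suspect when its title matches a keyword variant and its converted price falls below half of a reference price.

```python
from dataclasses import dataclass

# Hypothetical exchange rates to USD; a real system would fetch current rates.
RATES_TO_USD = {"USD": 1.0, "EUR": 1.10, "INR": 0.012}


@dataclass
class Listing:
    title: str
    price: float
    currency: str


def keyword_variants(keyword):
    """Return the keyword plus every variant with one letter deleted."""
    word = keyword.lower()
    variants = {word}
    for i in range(len(word)):
        variants.add(word[:i] + word[i + 1:])
    return variants


def to_usd(price, currency):
    """Convert a price to the common currency (USD in this sketch)."""
    return price * RATES_TO_USD[currency]


def is_suspect(listing, keyword, reference_price_usd, threshold=0.5):
    """Assumed rule: title matches a variant AND price is far below reference."""
    tokens = set(listing.title.lower().split())
    matches_keyword = bool(tokens & keyword_variants(keyword))
    price_usd = to_usd(listing.price, listing.currency)
    return matches_keyword and price_usd < threshold * reference_price_usd


def sort_listings(listings, keyword, reference_price_usd):
    """Split listings into (suspect, non_suspect) lists."""
    suspect, non_suspect = [], []
    for listing in listings:
        if is_suspect(listing, keyword, reference_price_usd):
            suspect.append(listing)
        else:
            non_suspect.append(listing)
    return suspect, non_suspect


listings = [
    Listing("Acme runner shoes", 120.0, "USD"),   # right spelling, normal price
    Listing("Acm runner shoes", 2000.0, "INR"),   # misspelled and very cheap
    Listing("Generic sandals", 10.0, "EUR"),      # unrelated product
]
suspect, non_suspect = sort_listings(listings, "Acme", reference_price_usd=100.0)
print([l.title for l in suspect])
```

Converting to one currency before comparing is what lets a single price threshold work across marketplaces in different countries.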

The Reporting Dashboard

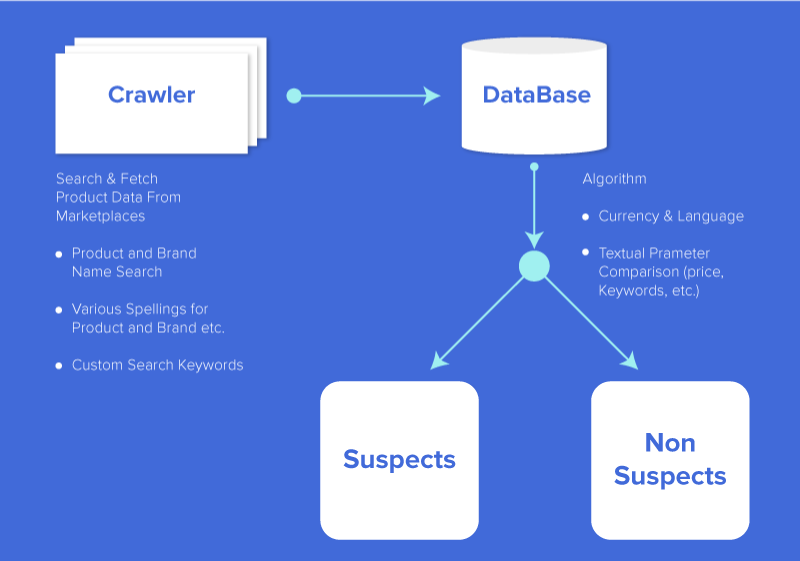

The client wanted to store all counterfeit information in one central place, which would simplify the next steps and enable accurate reporting.

For this, the team built a dashboard that streamlined data fetched by the crawler. This dashboard allowed:

- Logging all counterfeit products

- Adding reasons for why they were tagged as counterfeit

- Marking products as genuine, counterfeit, irrelevant, or ‘mark for client review’
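As a rough illustration of the record behind such a dashboard, the sketch below models a logged product with the four statuses named above and a list of reasons. The `ReviewStatus` and `LoggedProduct` names, the fields, and the example URL are hypothetical; the article does not describe the actual data model.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class ReviewStatus(Enum):
    """The four statuses named in the text."""
    GENUINE = "genuine"
    COUNTERFEIT = "counterfeit"
    IRRELEVANT = "irrelevant"
    CLIENT_REVIEW = "mark for client review"


@dataclass
class LoggedProduct:
    """Hypothetical dashboard record for one crawled listing."""
    url: str
    title: str
    status: Optional[ReviewStatus] = None  # None means not yet reviewed
    reasons: List[str] = field(default_factory=list)

    def mark(self, status: ReviewStatus, reason: Optional[str] = None) -> None:
        """Set the review status and optionally record why."""
        self.status = status
        if reason:
            self.reasons.append(reason)


product = LoggedProduct("https://example.com/item/1", "Acm runner shoes")
product.mark(ReviewStatus.COUNTERFEIT, "Misspelled brand name")
print(product.status.value, product.reasons)
```

Using a fixed set of statuses rather than free text keeps analysts' decisions consistent, which supports the standardized workflow the dashboard was meant to establish.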


Besides establishing standard workflows, the dashboard also enabled:

- Exporting the list of confirmed counterfeits to file for take-down

- Marking items/lists as “reported”

- A real-time status view that shows clients the work being done at any given point, along with crisp, easy-to-understand reports
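The export and "reported" steps could look something like the sketch below. The record layout (plain dictionaries with `url`, `title`, `status`, `reasons`, and `reported` keys), the CSV columns, and both function names are assumptions for illustration; the article does not specify the export format used for take-down filings.

```python
import csv
import io


def export_confirmed(products):
    """Return CSV text listing confirmed counterfeits not yet reported."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["url", "title", "reasons"])
    for item in products:
        if item["status"] == "counterfeit" and not item["reported"]:
            writer.writerow([item["url"], item["title"], "; ".join(item["reasons"])])
    return buffer.getvalue()


def mark_reported(products):
    """Flag every confirmed counterfeit as reported; return how many changed."""
    changed = 0
    for item in products:
        if item["status"] == "counterfeit" and not item["reported"]:
            item["reported"] = True
            changed += 1
    return changed


# Hypothetical records; URLs and titles are placeholders.
products = [
    {"url": "https://example.com/item/1", "title": "Acm runner shoes",
     "status": "counterfeit", "reasons": ["Misspelled brand name"], "reported": False},
    {"url": "https://example.com/item/2", "title": "Acme runner shoes",
     "status": "genuine", "reasons": [], "reported": False},
]
csv_text = export_confirmed(products)
reported_count = mark_reported(products)
print(csv_text)
```

Keeping export and marking as separate steps mirrors the two dashboard actions listed above, and lets an analyst confirm that a take-down request was actually filed before the item is flagged.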

One of the key challenges during the project was making the crawler work with all the key global marketplaces. Marketplaces around the world use different parameters, and some did not allow any crawler activity at all. The team worked around this by building quick data entry screens for logging counterfeits found manually on these sites into the dashboard. Subsequently, they built a browser extension that could send product data to the dashboard at the click of a button.


Looking to automate tedious manual processes at your enterprise? Let’s do a little brainstorming to see how Srijan can help.
