Reporting plays an important role in a test automation framework. The success and survival of a framework depend on how effectively its reporting mechanism is implemented.

To convey test results from the development team to the customer or business team, a detailed and interactive test report is required.

There are various reporting libraries that an automation framework designer can use for the reporting component. One of them is Extent HTML Report, which provides a beautiful, detailed and interactive report of automated tests.

Extent HTML Report

It is an interactive reporting mechanism which can be integrated with a Selenium test automation framework.

Extent Report provides many features:

- Dashboard - provides a detailed, graphical analysis of the project.

- Interactive - an interactive HTML report with a lot of UI widgets.

- Integration Support - can be configured with Java (JUnit, TestNG) and .NET (NUnit) test automation frameworks.

- Detailed Information - provides detailed information about the test cases, including details of the failed test cases.

How to generate an Extent Report

#Step 1: Download the Extent Report libraries and add them to your Selenium project, or add the dependency below to your pom.xml:

<dependency>

<groupId>com.aventstack</groupId>

<artifactId>extentreports</artifactId>

<version>3.1.5</version>

</dependency>

#Step 2: Create a Java folder, say utils, add another folder inside it called Listeners, and create a class extentListener that implements ITestListener. Add the following code:

package utils.Listeners;

import java.io.IOException;

import org.testng.ITestContext;

import org.testng.ITestListener;

import org.testng.ITestResult;

import com.aventstack.extentreports.ExtentReports;

import com.aventstack.extentreports.ExtentTest;

import com.aventstack.extentreports.Status;

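import com.aventstack.extentreports.reporter.ExtentHtmlReporter;

import com.aventstack.extentreports.reporter.configuration.ChartLocation;

import com.aventstack.extentreports.reporter.configuration.Theme;

// NOTE: Java cannot import a class from the default package; use the fully qualified name of your own baseFunctions class here

import baseFunctions;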

public class extentListener extends baseFunctions implements ITestListener {

public static String testName;

ExtentHtmlReporter htmlReporter;

ExtentReports extent;

public static ExtentTest test;

@Override

public void onStart(ITestContext context) {

// Write the HTML report to <project>/test-output/TestResultsReport.html

htmlReporter = new ExtentHtmlReporter(System.getProperty("user.dir") + "/test-output/TestResultsReport.html");

htmlReporter.config().setTestViewChartLocation(ChartLocation.TOP);

htmlReporter.config().setChartVisibilityOnOpen(true);

htmlReporter.config().setTheme(Theme.STANDARD);

htmlReporter.config().setDocumentTitle("XYZ.com");

htmlReporter.config().setEncoding("utf-8");

htmlReporter.config().setReportName("Automation Report");

extent = new ExtentReports();

extent.attachReporter(htmlReporter);

}

@Override

public void onFinish(ITestContext context) {

extent.flush();

}

@Override

public void onTestStart(ITestResult result) {

test = extent.createTest(result.getName());

testName = result.getName();

test.log(Status.INFO, result.getName() + " Test has Started");

System.out.println("*******TEST STARTED ******");

}

@Override

public void onTestSuccess(ITestResult result) {

test.log(Status.PASS, result.getName() + " Test Passed Successfully");

}

@Override

public void onTestFailure(ITestResult result) {

test.log(Status.FAIL, result.getName() + " Test failed: " + result.getThrowable());

}

@Override

public void onTestSkipped(ITestResult result) {

test.log(Status.SKIP, result.getName() + " Test skipped: " + result.getThrowable());

}

@Override

public void onTestFailedButWithinSuccessPercentage(ITestResult result) {

}

}
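The listener above writes to a fixed file name, so each run overwrites the previous report. If you would rather keep one report per run, the path can be built with a timestamp. This is only a minimal sketch using the standard library; the class `ReportPaths`, the method `reportFile` and the file name pattern are illustrative assumptions, not part of Extent Reports.

```java
import java.nio.file.Path;
import java.nio.file.Paths;
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;

// Hypothetical helper (not an Extent Reports class): builds a per-run report path.
class ReportPaths {

    // Timestamp format used in the file name, e.g. 20240102-030405
    private static final DateTimeFormatter STAMP = DateTimeFormatter.ofPattern("yyyyMMdd-HHmmss");

    // Returns <baseDir>/test-output/TestResultsReport-<timestamp>.html
    static Path reportFile(String baseDir, LocalDateTime runStart) {
        String fileName = "TestResultsReport-" + STAMP.format(runStart) + ".html";
        return Paths.get(baseDir, "test-output", fileName);
    }

    public static void main(String[] args) {
        // Same base directory the listener uses: the project's working directory
        Path report = reportFile(System.getProperty("user.dir"), LocalDateTime.now());
        System.out.println(report);
    }
}
```

In `onStart`, the resulting path could be passed as a string to the `ExtentHtmlReporter` constructor in place of the fixed path.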

#Step 3: For every failed assertion, attach a screenshot to the report. Add the class softAssertionListener in the same Listeners folder:

package utils.Listeners;

import java.io.IOException;

import java.util.Map;

import org.testng.asserts.IAssert;

import org.testng.asserts.SoftAssert;

import org.testng.collections.Maps;

public class softAssertionListener extends SoftAssert {

private final Map<AssertionError, IAssert<?>> m_errors = Maps.newLinkedHashMap();

@Override

protected void doAssert(IAssert<?> a) {

onBeforeAssert(a);

try {

a.doAssert();

onAssertSuccess(a);

} catch (AssertionError ex) {

onAssertFailure(a, ex);

m_errors.put(ex, a);

try {

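// Log the failure message, then attach the screenshot to the same test entry

extentListener.test.fail("Snapshot below: " + ex.getMessage());

extentListener.test.addScreenCaptureFromPath(baseFunctions.takeScreenShot(userjourney.methodName));

} catch (IOException e) {

// The screenshot could not be captured or attached; the assertion failure itself is still recorded

e.printStackTrace();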

}

System.out.println("Screenshot taken");

} finally {

onAfterAssert(a);

}

}

@Override

public void assertAll() {

if (!m_errors.isEmpty()) {

StringBuilder sb = new StringBuilder("The following asserts failed:");

boolean first = true;

for (Map.Entry<AssertionError, IAssert<?>> ae : m_errors.entrySet()) {

if (first) {

first = false;

} else {

sb.append(",");

}

sb.append("\n\t");

sb.append(ae.getKey().getMessage());

}

throw new AssertionError(sb.toString());

}

}

}
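The class above follows a collect-then-report pattern: each failed assertion is recorded rather than thrown, and `assertAll()` raises a single error at the end. To see that pattern in isolation, here is a minimal sketch in plain Java with no TestNG dependency. `SoftChecks`, `check` and `failureCount` are hypothetical names used only for illustration; the message format mirrors the `assertAll()` method shown above.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for the soft-assert idea; this is not TestNG API.
class SoftChecks {

    // Messages of the checks that failed, in the order they were run
    private final List<String> failures = new ArrayList<>();

    // Records a failure instead of throwing, so later checks still run
    void check(boolean condition, String message) {
        if (!condition) {
            failures.add(message);
        }
    }

    int failureCount() {
        return failures.size();
    }

    // Throws one AssertionError listing every recorded failure
    void assertAll() {
        if (failures.isEmpty()) {
            return;
        }
        throw new AssertionError(
                "The following asserts failed:\n\t" + String.join(",\n\t", failures));
    }

    public static void main(String[] args) {
        SoftChecks checks = new SoftChecks();
        checks.check("Home".equals("Home"), "page title mismatch");
        checks.check(3 == 4, "cart item count mismatch");
        System.out.println("Recorded failures: " + checks.failureCount());
    }
}
```

In the TestNG version, the screenshot is taken at the point where the failure is recorded, which is why the report shows one snapshot per failed assertion.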

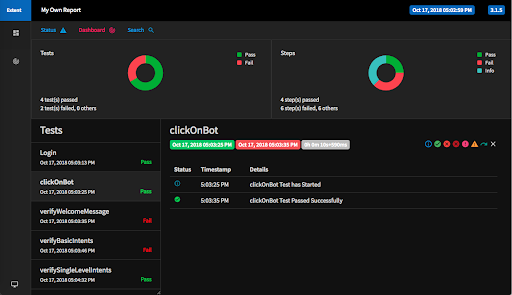

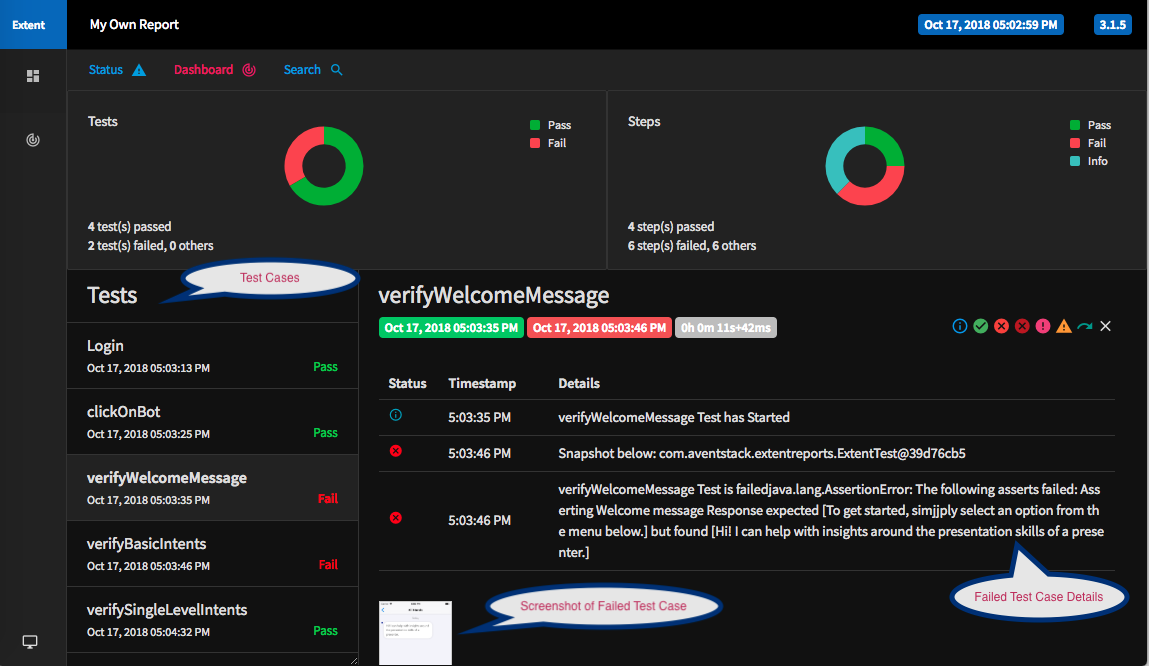

#Step 4: Execute your test cases and visualise your beautiful report

Here’s what the final reports look like:

Pass test case

Failed Test Case - includes screenshots & the failure details
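The failure details in the report come from `result.getThrowable()`. Concatenating a throwable into a string only gives its class name and message, so if you also want the full stack trace in the log entry, it has to be rendered to text first. A minimal sketch using only the standard library follows; `FailureDetails` and `stackTraceOf` are hypothetical names, not part of TestNG or Extent Reports.

```java
import java.io.PrintWriter;
import java.io.StringWriter;

// Hypothetical helper: renders a throwable's full stack trace as a string.
class FailureDetails {

    static String stackTraceOf(Throwable error) {
        StringWriter buffer = new StringWriter();
        // PrintWriter is closed (and flushed) by try-with-resources
        try (PrintWriter writer = new PrintWriter(buffer)) {
            error.printStackTrace(writer);
        }
        return buffer.toString();
    }

    public static void main(String[] args) {
        Throwable failure = new AssertionError("expected [Home] but found [Login]");
        System.out.println(stackTraceOf(failure));
    }
}
```

The returned string could then be appended to the message passed to `test.log(Status.FAIL, ...)` in `onTestFailure`.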

And that’s how you can create interactive and detailed automation test case reports.

Do you have some other tips and tricks for presenting automation test reports? Don’t forget to share them in the comments below.
